- HOW IT WORKS

How Cloud AI Manager Turns Connected Content Into Usable Answers

Connect

Index

Ask

Step 1: Multi-Source Content Ingestion

Structured Data Extraction

CRM fields, project metadata, and user attributes are preserved for filtering and context

Unstructured Text Processing

Documents, Slack threads, and emails are parsed to extract readable text while preserving formatting

Permission Mapping

Access controls from source systems are mapped to Cloud AI Manager to ensure users only see content they're authorized to view
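As a rough illustration of permission mapping, the sketch below translates source-specific access entries into one unified set of principals. The `identity_map` lookup table, the `user:` and `group:` ID format, and the `map_acl` name are assumptions made for this example, not the product's actual schema. Unknown entries are dropped so that access fails closed.

```python
def map_acl(
    source: str,
    acl: list[str],
    identity_map: dict[tuple[str, str], str],
) -> frozenset[str]:
    """Translate a source system's ACL entries into unified principal IDs.

    identity_map is a hypothetical lookup: (source, source-specific id) -> principal.
    Entries with no known mapping are dropped, so unmapped identities grant nothing.
    """
    return frozenset(
        identity_map[(source, entry)]
        for entry in acl
        if (source, entry) in identity_map
    )


# Hypothetical identities from two source systems resolving to the same user.
identity_map = {
    ("slack", "U123"): "user:alice",
    ("drive", "alice@example.com"): "user:alice",
    ("drive", "eng@example.com"): "group:eng",
}
doc_principals = map_acl(
    "drive",
    ["alice@example.com", "eng@example.com", "stranger@example.com"],
    identity_map,
)
```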

Incremental Updates

Only new or modified content is re-indexed, keeping your knowledge base current without full re-syncs
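One common way to detect new or modified content is to compare a content hash against the hash stored at the last sync. The sketch below assumes that approach; the page does not say how Cloud AI Manager detects changes, and `plan_reindex` is a hypothetical helper.

```python
import hashlib


def content_hash(text: str) -> str:
    """Stable fingerprint of a document's text."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def plan_reindex(
    previous: dict[str, str], current_docs: dict[str, str]
) -> dict[str, list[str]]:
    """Decide which documents need work on this sync.

    previous:     doc_id -> hash recorded at the last sync
    current_docs: doc_id -> current text from the source system
    """
    to_index = sorted(
        doc_id
        for doc_id, text in current_docs.items()
        if previous.get(doc_id) != content_hash(text)  # new or changed
    )
    to_delete = sorted(doc_id for doc_id in previous if doc_id not in current_docs)
    return {"index": to_index, "delete": to_delete}


previous = {
    "a": content_hash("hello"),
    "b": content_hash("old text"),
    "c": content_hash("removed later"),
}
current = {"a": "hello", "b": "new text", "d": "brand new"}
plan = plan_reindex(previous, current)
```

Here document `a` is untouched and skipped, `b` and `d` are queued for indexing, and `c` is queued for removal.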

What Gets Indexed

Documents

Conversations

Structured Records

Web Content

RAG Pipeline Architecture

- Content chunked into semantic units (paragraphs, sections, records)

- Each chunk converted to vector embeddings using state-of-the-art models

- Vectors stored in high-performance index with metadata tags

- Keyword index built for hybrid search combining semantic and lexical retrieval
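The four stages above can be sketched end to end in a few lines. This is a toy version under stated assumptions: `toy_embed` is a hashed bag-of-words stand-in for a real embedding model, chunks are plain paragraphs, and both indexes are in-memory Python structures rather than a production vector store.

```python
import hashlib
import re
from collections import defaultdict

DIM = 64  # toy embedding size, chosen only for illustration


def tokenize(text: str) -> list[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


def toy_embed(text: str) -> list[float]:
    """Hashed bag-of-words vector; a placeholder for a real embedding model."""
    vec = [0.0] * DIM
    for tok in tokenize(text):
        bucket = int(hashlib.md5(tok.encode("utf-8")).hexdigest(), 16) % DIM
        vec[bucket] += 1.0
    return vec


def build_index(docs: dict[str, str]):
    """docs: source name -> raw text. Returns (vector_store, keyword_index)."""
    vector_store = []                 # records with vector plus metadata tags
    keyword_index = defaultdict(set)  # token -> ids of chunks containing it
    for source, text in docs.items():
        paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
        for n, para in enumerate(paragraphs):
            chunk_id = f"{source}#{n}"
            vector_store.append(
                {"id": chunk_id, "vector": toy_embed(para), "text": para, "source": source}
            )
            for tok in set(tokenize(para)):
                keyword_index[tok].add(chunk_id)
    return vector_store, keyword_index


store, keywords = build_index(
    {"wiki": "Refund policy is 30 days.\n\nShipping takes 5 days."}
)
```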

Step 2: Intelligent Indexing & Vector Storage

Semantic Chunking

Content is intelligently split based on meaning, not just character count, preserving context
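A minimal sketch of splitting on structure rather than raw character count, assuming paragraph boundaries are a reasonable proxy for units of meaning. Paragraphs are packed into chunks up to a size budget and are never cut mid-paragraph. The `max_chars` budget and function name are illustrative, not product settings.

```python
def chunk_by_paragraph(text: str, max_chars: int = 500) -> list[str]:
    """Pack whole paragraphs into chunks of at most max_chars where possible.

    A single paragraph longer than max_chars is kept intact as its own chunk
    rather than being split in the middle of a thought.
    """
    paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
    chunks: list[str] = []
    current = ""
    for para in paragraphs:
        candidate = f"{current}\n\n{para}" if current else para
        if len(candidate) <= max_chars or not current:
            current = candidate
        else:
            chunks.append(current)
            current = para
    if current:
        chunks.append(current)
    return chunks


chunks = chunk_by_paragraph("aaaa\n\nbbbb\n\ncccc", max_chars=10)
```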

Vector Embeddings

Each chunk becomes a high-dimensional vector that captures semantic meaning for similarity search
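Similarity search over embeddings is often done with cosine similarity, which compares the direction of two vectors. The sketch below assumes that metric (the page does not name one) and uses tiny hand-written vectors in place of real embeddings.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # treat an all-zero vector as similar to nothing
    return dot / (norm_a * norm_b)


def nearest(query_vec: list[float], chunk_vecs: dict[str, list[float]], k: int = 3) -> list[str]:
    """Return the ids of the k chunks most similar to the query vector."""
    ranked = sorted(
        chunk_vecs.items(),
        key=lambda item: cosine_similarity(query_vec, item[1]),
        reverse=True,
    )
    return [chunk_id for chunk_id, _ in ranked[:k]]


top = nearest(
    [1.0, 0.0],
    {"a": [0.9, 0.1], "b": [0.0, 1.0], "c": [0.5, 0.5]},
    k=2,
)
```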

Metadata Enrichment

Source, timestamp, author, tags, and permissions are attached to each indexed chunk

Hybrid Search Index

Combines vector similarity with keyword matching, so results can match on meaning as well as on exact terms
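One widely used way to merge a vector ranking with a keyword ranking is reciprocal rank fusion (RRF), sketched below. The page does not state which fusion method Cloud AI Manager uses, so treat RRF and the conventional constant `k = 60` as assumptions for illustration.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Fuse several ranked lists of chunk ids into one ranking.

    Each list contributes 1 / (k + rank) per item, so chunks that rank well
    in more than one list rise to the top. Ties are broken by chunk id.
    """
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, chunk_id in enumerate(ranking, start=1):
            scores[chunk_id] = scores.get(chunk_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=lambda chunk_id: (-scores[chunk_id], chunk_id))


vector_hits = ["a", "b", "c"]   # ordered by semantic similarity
keyword_hits = ["b", "d"]       # ordered by lexical match
fused = reciprocal_rank_fusion([vector_hits, keyword_hits])
```

Chunk `b` comes first because it appears in both lists, even though it tops only one of them.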

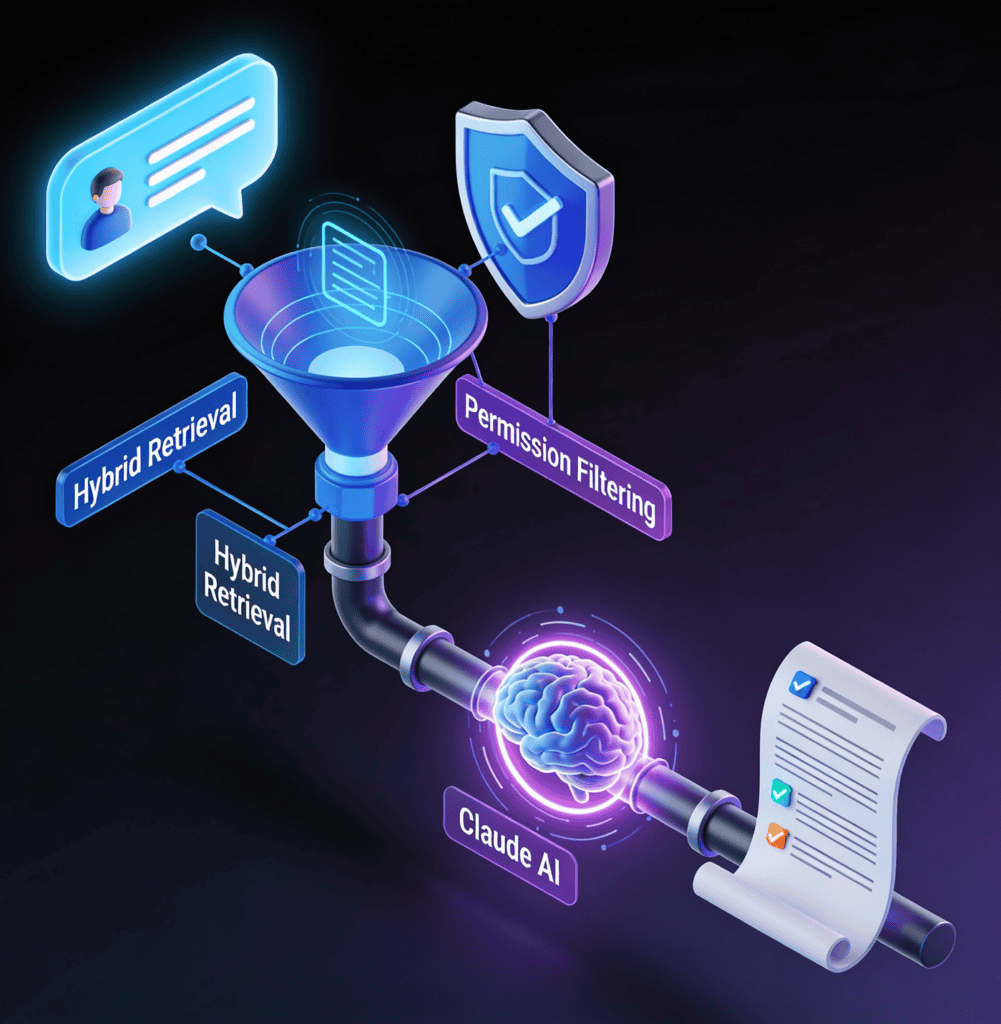

Step 3: Query & Retrieval-Augmented Generation

Query Understanding

Your question is analyzed for intent, entities, and context to guide retrieval

Hybrid Retrieval

Vector similarity search combined with keyword matching surfaces the most relevant chunks

Permission Filtering

Results are automatically filtered based on your access rights, so you only see what you're allowed to see
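A minimal sketch of query-time permission filtering, assuming each indexed chunk carries the set of principals allowed to read it. The `Chunk` type and the `user:` and `group:` ID format are hypothetical, introduced only for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    chunk_id: str
    text: str
    allowed_principals: frozenset[str]  # who may read this chunk


def filter_by_permission(chunks: list[Chunk], user_principals: set[str]) -> list[Chunk]:
    """Keep only chunks that share at least one principal with the user."""
    return [c for c in chunks if c.allowed_principals & user_principals]


retrieved = [
    Chunk("hr#1", "Salary bands", frozenset({"group:hr"})),
    Chunk("eng#4", "Deploy runbook", frozenset({"group:eng", "user:alice"})),
    Chunk("pub#2", "Holiday calendar", frozenset({"group:everyone"})),
]
visible = filter_by_permission(retrieved, {"user:alice", "group:everyone"})
```

Filtering before context assembly means restricted text never reaches the prompt, rather than being hidden after an answer is generated.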

Context Assembly

Top-ranked chunks are assembled into context with source attribution
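Context assembly can be pictured as numbering the best chunks and labeling each with its source, so the generated answer can cite `[1]`, `[2]`, and so on. The record fields (`text`, `source`, `score`) and the output layout below are assumptions for illustration, not the product's actual prompt format.

```python
def assemble_context(chunks: list[dict], max_chunks: int = 3) -> str:
    """Build a numbered context block from the highest-scoring chunks.

    Each chunk is a dict with "text", "source", and "score" keys.
    """
    top = sorted(chunks, key=lambda c: c["score"], reverse=True)[:max_chunks]
    blocks = [
        f"[{i}] (source: {c['source']})\n{c['text']}"
        for i, c in enumerate(top, start=1)
    ]
    return "\n\n".join(blocks)


context = assemble_context(
    [
        {"text": "Shipping takes 5 days.", "source": "wiki/shipping", "score": 0.2},
        {"text": "Refunds are accepted within 30 days.", "source": "wiki/refunds", "score": 0.9},
    ]
)
```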

Answer Generation

Claude AI synthesizes retrieved context into a natural language answer with inline citations

Why Retrieval-Augmented Generation?

What RAG Gets Right

- Answers grounded in your actual data, not training data from the internet

- Citations link back to source material so you can verify every claim

- Stays current as your data changes, with no model retraining required

- Natural language understanding instead of rigid keyword matching

- Synthesizes information from multiple sources into coherent answers

What Traditional Search Can't Do

- Keyword search misses synonyms and related concepts

- Returns a list of links, not synthesized answers

- Can't combine information from multiple sources

- Requires exact term matching for relevance

- Users must read through multiple documents to find answers

Powered by Claude AI